Earlier this month, a Facebook software engineer from Egypt wrote an open note to his colleagues with a warning: “Facebook is losing trust among Arab users.”

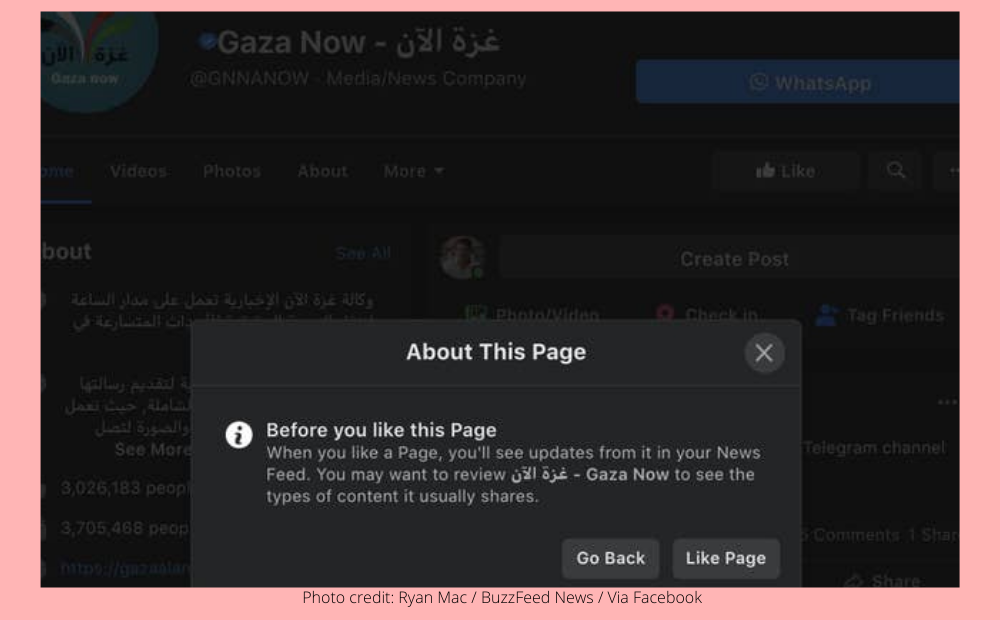

Facebook had been a “tremendous help” for activists who used it to communicate during the Arab Spring of 2011, he said, but during the ongoing Palestinian–Israeli conflict, censorship — either perceived or documented — had made Arab and Muslim users skeptical of the platform. As proof, the engineer included a screenshot of Gaza Now, a verified news outlet with nearly 4 million followers, which, when liked on Facebook, prompted a “discouraging” pop-up message stating, “You may want to review غزة الآن – Gaza Now to see the types of content it usually shares.”

“I made an experiment and tried liking as many Israeli news pages as possible, and ‘not a single time’ have I received a similar message,” the engineer wrote, suggesting that the company’s systems were prejudiced against Arabic content. “Are all of these incidents resulted from a model bias?”

Even after hitting the like button, Facebook users were asked whether they were sure they wanted to follow the Gaza Now page, prompting one employee to ask whether this was an example of anti-Arab bias.

The post prompted a cascade of comments from other colleagues. One asked why an Instagram post from actor Mark Ruffalo about Palestinian displacement had received a label warning of sensitive content. Another alleged that ads from Muslim organizations raising funds during Ramadan with “completely benign content” were suspended by Facebook’s artificial intelligence and human moderators.

“I fear we are at a point where the next mistake will be the straw that breaks the camel’s back and we could see our communities migrating to other platforms,” another Facebook worker wrote about the mistrust brewing among Arab and Muslim users.

While there is now a ceasefire between Israel and Hamas, Facebook must still deal with a sizable chunk of employees who have been arguing internally about whether the world’s largest social network is exhibiting anti-Muslim and anti-Arab bias. Some worry Facebook is selectively enforcing its moderation policies around related content, others believe it is over-enforcing them, and still others fear it may be biased toward one side or the other. One thing they share: the belief that Facebook is once again bungling enforcement decisions around a politically charged event.

While some perceived censorship across Facebook’s products has been attributed to bugs (including one that prevented users from posting Instagram stories about Palestinian displacement and other global events), other incidents, including the blocking of Gaza-based journalists from WhatsApp and the forced following of millions of accounts on a Facebook page supporting Israel, have not been explained by the company. Earlier this month, BuzzFeed News also reported that Instagram had mistakenly banned content about the Al-Aqsa Mosque, the site where Israeli soldiers clashed with worshippers during Ramadan, because the platform associated its name with a terrorist organization.

“It truly feels like an uphill battle trying to get the company at large to acknowledge and put in real effort instead of empty platitudes into addressing the real grievances of Arab and Muslim communities,” one employee wrote in an internal group for discussing human rights.

The situation has become so inflamed inside the company that a group of about 30 employees banded together earlier this month to file internal appeals to restore content on Facebook and Instagram that they believe was improperly blocked or removed.

“This is extremely important content to have on our platform and we have the impact that comes from social media showcasing the on-the-ground reality to the rest of the world,” one member of that group wrote to an internal forum. “People all over the world are depending on us to be their lens into what is going on around the world.”

The perception of bias against Arabs and Muslims is hurting the company’s brands as well. On both the Apple and Google mobile app stores, the Facebook and Instagram apps have recently been flooded with negative ratings, driven by a decline in user trust due to “recent escalations between Israel and Palestine,” according to one internal post.

In a move first reported by NBC News, some employees reached out to both Apple and Google to attempt to remove the negative reviews.

“We are responding to people’s protests about censoring with more censoring? That is the root cause right here,” one person wrote in response to the post.

“This is the result of years and years of implementing policies that just don’t scale globally,” they continued. “As an example, by internal definitions, sizable portions of some populations are considered terrorists. A natural consequence is that our manual enforcement systems and automations are biased.”

Facebook spokesperson Andy Stone acknowledged that the company had made mistakes and noted that the company has a team on the ground with Arabic and Hebrew speakers to monitor the situation.

“We know there have been several issues that have impacted people’s ability to share on our apps,” he said in a statement. “While we have fixed them, they should never have happened in the first place and we’re sorry to anyone who felt they couldn’t bring attention to important events, or who felt this was a deliberate suppression of their voice. This was never our intention — nor do we ever want to silence a particular community or point of view.”

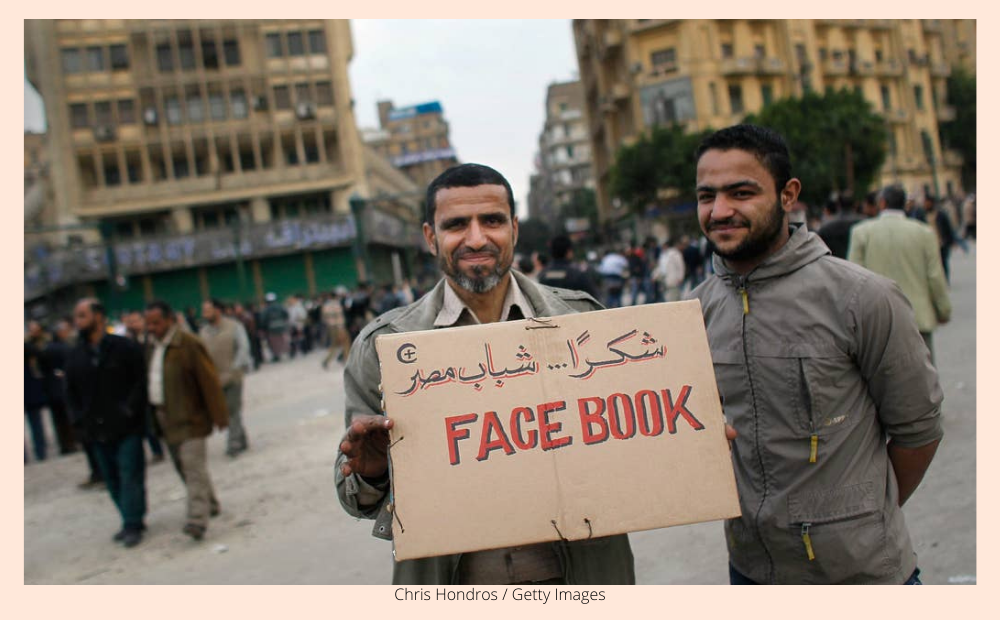

Anti-government protesters in Cairo hold a sign referencing Facebook, which was instrumental in organizing protesters in Tahrir Square, on Feb. 4, 2011.

Social media companies including Facebook have long cited their use during the 2011 uprisings against repressive Middle Eastern regimes, popularly known as the Arab Spring, as evidence that their platforms democratized information. Mai ElMahdy, a former Facebook employee who worked on content moderation and crisis management from 2012 to 2017, said the social network’s role in the revolutionary movements was a main reason why she joined the company.

“I was in Egypt back in the time when the revolution happened, and I saw how Facebook was a major tool for us to use to mobilize,” she said. “Up until now, whenever they want to brag about something in the region, they always mention Arab Spring.”

Her time at the company, however, soured her views on Facebook and Instagram. While she oversaw the training of content moderators in the Middle East from her post in Dublin, she criticized the company for being “US-centric” and failing to hire enough people with management expertise in the region.

“I remember that one person mentioned in a meeting, maybe we should remove content that says ‘Allahu akbar’ because that might be related to terrorism,” ElMahdy said, recalling a discussion more than five years ago about the Muslim religious term and exclamation, which means “God is great.”

Stone said the phrase does not break Facebook’s rules.

Jillian C. York, the director of international freedom of expression for the Electronic Frontier Foundation, has studied content moderation within the world’s largest social network and said that the company’s approach to enforcement around content about Palestinians has always been haphazard. In her book *Silicon Values: The Future of Free Speech Under Surveillance Capitalism*, she notes that the company’s mishaps, including the blocking of accounts of journalists and a political party account in the West Bank, had led users to popularize a hashtag, #FBCensorsPalestine.

“I do agree that it may be worse now just because of the conflict, as well as the pandemic and the subsequent increase in automation,” she said, noting how Facebook’s capacity to hire and train human moderators has been affected by COVID-19.

Ashraf Zeitoon, the company’s former head of policy for the Middle East and North Africa region; ElMahdy; and two other former Facebook employees with policy and moderation expertise also attributed the lack of sensitivity to Palestinian content to the political environment and lack of firewalls within the company. At Facebook, those handling government relations on the public policy team also weigh in on Facebook’s rules and what should or shouldn’t be allowed on the platform, creating possible conflicts of interest where lobbyists in charge of keeping governments happy can put pressure on how content is moderated.

That gave an advantage to Israel, where Facebook had dedicated more personnel and attention, Zeitoon said. When Facebook hired Jordana Cutler, a former adviser to Israeli Prime Minister Benjamin Netanyahu, to oversee public policy in a country of some 9 million people, Zeitoon, as head of public policy for the Middle East and North Africa, was responsible for the interests of more than 220 million people across 25 Arab countries and regions, including the Palestinian territories.

Facebook employees have raised concerns about Cutler’s role and whose interests she prioritizes. In a September interview, the Jerusalem Post identified her as “our woman at Facebook,” while Cutler noted that her job “is to represent Facebook to Israel, and represent Israel to Facebook.”

“We have meetings every week to talk about everything from spam to pornography to hate speech and bullying and violence, and how they relate to our community standards,” she said in the interview. “I represent Israel in these meetings. It’s very important for me to ensure that Israel and the Jewish community in the Diaspora have a voice at these meetings.”

Zeitoon, who recalls arguing with Cutler over whether the West Bank should be considered “occupied territories” in Facebook’s rules, said he was “shocked” after seeing the interview. “At the end of the day, you’re an employee of Facebook, and not an employee of the Israeli government,” he said. (The United Nations defines the West Bank and the Gaza Strip as Israeli-occupied.)

Facebook’s dedication of resources to Israel shifted internal political dynamics, said Zeitoon and others. ElMahdy and another former member of Facebook’s community operations organization in Dublin claimed that Israeli members of the public policy team would often pressure their team on content takedown and policy decisions. There was no real counterpart that directly represented Palestinian interests during their time at Facebook, they said.

“The role of our public policy team around the world is to help make sure governments, regulators, and civil society understand Facebook’s policies, and that we at Facebook understand the context of the countries where we operate,” Stone, the company spokesperson, said. He noted that the company now has a policy team member “focused on Palestine and Jordan.”

Cutler did not respond to a request for comment.

ElMahdy specifically remembered discussions at the company about how the platform would handle mentions of “Zionism” and “Zionist” (terms associated with the reestablishment of a Jewish state) as proxies for “Judaism” and “Jew.” Like many mainstream social media platforms, Facebook’s rules afford special protections to mentions of “Jews” and other religious groups, allowing the company to remove hate speech that targets people because of their religion.

Members of the policy team, ElMahdy said, pushed for “Zionist” to be equated with “Jew,” and guidelines affording special protections to the term for settlers were eventually put into practice after she left in 2017. Earlier this month, the Intercept published Facebook’s internal rules to content moderators on how to handle the term “Zionist,” suggesting the company’s rules created an environment that could stifle debate and criticism of the Israeli settler movement.

In a statement, Facebook said it recognizes that the word “Zionist” is used in political debate.

“Under our current policies, we allow the term ‘Zionist’ in political discourse, but remove attacks against Zionists in specific circumstances, when there’s context to show it’s being used as a proxy for Jews or Israelis, which are protected characteristics under our hate speech policy,” Stone said.

Children hold Palestinian flags at the site of a house in Gaza that was destroyed by Israeli airstrikes on May 23, 2021.

As Facebook and Instagram users around the world complained that their content about Palestinians was blocked or removed, Facebook’s growth team assembled a document on May 17 to assess how the strife in Gaza affected user sentiment.

Among its findings, the team concluded that Israel, which had 5.8 million Facebook users, had been the top country in the world to report content under the company’s rules for terrorism, with nearly 155,000 complaints over the preceding week. It was third in flagging content under Facebook’s policies for violence and hate violations, outstripping more populous countries like the US, India, and Brazil, with about 550,000 total user reports in that same time period.

In an internal group for discussing human rights, one Facebook employee wondered whether the requests from Israel had any impact on the company’s alleged overenforcement of Arabic and Muslim content. While Israel had a little more than twice as many Facebook users as the Palestinian territories, people in the country had reported 10 times as much content under the platform’s rules on terrorism and filed more than eight times as many complaints for hate violations as Palestinian users, according to the employee.

“When I look at all of the above, it made me wonder,” they wrote, including a number of internal links and a 2016 news article about Facebook’s compliance with Israeli takedown requests, “are we ‘consistently, deliberately, and systematically silencing Palestinians voices?’”

For years, activists and civil society groups have wondered if pressure from the Israeli government through takedown requests has influenced content decision-making at Facebook. In its own report this month, the Arab Center for the Advancement of Social Media tracked 500 content takedowns across major social platforms during the conflict and suggested that “the efforts of the Israeli Ministry of Justice’s Cyber Unit — which over the past years submitted tens of thousands of cases to companies without any legal basis — is also behind many of these reported violations.”

“In line with our standard global process, when a government reports content that does not break our rules but is illegal in their country, after we conduct a legal review, we may restrict access to it locally,” Stone said. “We do not have a special process for Israel.”

As the external pressure has mounted, the informal team of about 30 Facebook employees filing internal complaints have attempted to triage a situation their leaders have yet to address publicly. As of last week, they had filed more than 80 appeals of content takedowns related to the Israeli–Palestinian conflict and found that a “large majority of the decision reversals [were] because of false positives from our automated systems,” specifically around the misclassification of hate speech. In other instances, videos and pictures about police and protesters had been mistakenly taken down for “bullying/harassment.”

“This has been creating more distrust of our platform and reaffirming people’s concerns of censorship,” the engineer wrote.

It’s also affecting the minority of Palestinian and Palestinian American employees within the company. Earlier this week, an engineer who identified as “Palestinian American Muslim” wrote a post titled “A Plea for Palestine” asking their colleagues to understand that “standing up for Palestinians does not equate to Anti-semitism.”

“I feel like my community has been silenced in a societal censorship of sorts; and in not making my voice heard, I feel like I am complicit in this oppression,” they wrote. “Honestly, it took me a while to even put my thoughts into words because I genuinely fear that if i speak up about how i feel, or i try to spread awareness amongst my peers, I may receive an unfortunate response which is extremely disheartening.”

Though Facebook execs have since set up a special task force to expedite the appeals of content takedowns about the conflict, they seem satisfied with the company’s handling of Arabic and Muslim content during the escalating tension in the Middle East.

In an internal update issued last Friday, James Mitchell, a vice president who oversees content moderation, said that while there had been “reports and perception of systemic over-enforcement,” Facebook had “not identified any ongoing systemic issues.” He also noted that the company had been using terms and classifiers with “high-accuracy precision” to flag content for potential hate speech or incitement of violence, allowing such content to be removed automatically.

He said his team was committed to doing a review to see what the company could do better in the future, but only acknowledged a single error, “incorrectly enforcing on content that included the phrase ‘Al Aqsa,’ which we fixed immediately.”

Internal documents seen by BuzzFeed News show that the fix was not immediate. A separate post from earlier in the month showed that over a period of at least five days, Facebook’s automated systems and moderators “deleted” some 470 posts that mentioned Al-Aqsa, attributing the removals to terrorism and hate speech.

Some employees were unsatisfied with Mitchell’s update.

“I also find it deeply troubling that we have high-accuracy precision classifiers and yet we just told ~2 billion Muslims that we confused their third holiest site, Al Aqsa, with a dangerous organization,” one employee wrote in reply to Mitchell.

“At best, it sends a message to this large group of our audience that we don’t care enough to get something so basic and important to them right,” they continued. “At worst, it helped reinforce the stereotype ‘Muslims are terrorists’ and the idea that free-speech is restricted for certain populations.”